D2D Cont'd - Where do we go from here?

The operative word of the year is "Tactical"

Late last year, investors seemed to get tired of the AI trade with many AI stocks selling off. More than anything fundamental, we see this largely as a rotation, a dose of caution where people are asking reasonable questions about the biggest trend in technology right now. Then, as earnings season rolled around, every company talked about the “insatiable demand” for AI. The challenge this year will be squaring those two opposing views.

For starters, the demand for AI compute looks set to continue to grow. People are finding ever more useful things to do with AI. We think the current craze for ‘agents’ like OpenClaw (previously Clawd, then MoltBot) shows a hint of AI applications that will actually be useful to a wide consumer audience. OpenClaw itself is probably a few iterations from being broadly adoptable, but it looks promising. There are also growing signs that the AI Labs are getting to the point where they can use AI to make AI better. Add to this advances in generative video, robotics and a few other almost-but-not-quite-there use cases and we think it is safe to say there is strong long-term support for demand. That all being said, this demand could be non-linear, with fits and starts along the way. Measured in terms of technology adoption the ramp could be quite rapid, but in Street time it could feel like forever. In particular, we think one large potential source of delay will be the need to form new Internet business agreements. For instance, the interactions between AI agents and e-commerce sites are going to be fraught territory for the lawyers to negotiate.

This will support demand for all sorts of semis, but the actual mix of chips is still very much up for debate. For the moment, the scaling laws still hold, which supports the demand for ever more performant GPUs for training. Much of that market is going to go to Nvidia, but by 2027 the AMD MI500 and possibly Google/Broadcom TPUs will be in contention for some share gains. Beyond that, a lot will depend on how AI will actually be used. One of the interesting off-shoots of OpenClaw is the need for local compute – everyone running out and buying Mac Minis. This could actually spell a healthy demand for CPUs, maybe.

At this point we start to run into real constraints. TSMC capacity is essentially at full utilization. Memory fabs are past full utilization. It looks likely that these conditions will persist into 2027. Many of the companies actively participating in this build-out have said they are “sold out” for the year.

We think the best way to approach these stocks this year will be to find companies that are somehow adding to the industry’s capacity in unexpected ways, or companies whose full participation in the build-out has not yet entered numbers. We see these demand trends creating waves that fill up companies’ capacity, and so we think there will be opportunity in finding companies where the wave is about to crest.

The first camp is populated by companies like Intel and Broadcom. The stock price charts for Nvidia and Broadcom look almost interchangeable; both are now seen as pure-play exposure to AI demand. However, Nvidia’s full outlook is now largely priced in, with upside driven mainly by their ability to negotiate more allocation from TSMC. By comparison, we think Broadcom has been hit by a dose of skepticism, but they have the ability to outperform relative to expectations given strong demand for Google TPUs. Moreover, the demand for foundry, especially packaging, has gotten so high that many customers are now looking at Intel in a more positive light. Intel’s foundry still has a long learning curve to climb, but better Intel’s low yield than TSMC’s zero allocation.

Then there is the large universe of companies that are poised to see significant growth from AI but have not yet seen the acceleration. This happened to memory last year, when the demand wave inundated the entire sector. We think networking could see something similar this year. The wave will probably not be of the same magnitude, but the sector could still see some significant upside to earnings.

Networking covers a lot of territory. We have already seen strong results from a number of pure-play-ish AI networking companies. Credo and Astera Labs, for instance, have had this baked into their numbers for a while. Beyond that, however, several other companies look set to gain. These range from optical component suppliers and laser makers all the way to network equipment makers. One of the most frequent questions we get is about Cisco’s participation in AI. That company missed the transition to hyperscale data centers, losing out to Arista to a small degree and to hyperscalers’ internally designed systems to a larger degree. Could they now stage a comeback with AI data centers? We have searched fairly widely and mostly found other people asking the same question. It is hard to see how they could play here, but it is worth keeping an eye on them. We have mentioned SiTime several times in the past, and they look very well positioned here, especially with their recent acquisition of Renesas’s timing business, which could bring them into many more design sockets and customers. Another blast from the past is Nokia. As much as their core 5G market looks lackluster, we think their optical systems business could benefit as optical content grows in scale-out and, later, scale-up systems. They still have a lot of 5G reliance in their numbers, but optical for AI could be a surprise.

Finally, there are the data centers themselves. This looks like the most fragile component of the AI ecosystem. One of the biggest sources of concern in Q4’s sell-off was the increasingly complex financial engineering taking place in the funding of AI data centers and the growing amounts of debt that come with it. From what we can tell, the debt funding is likely to grow significantly this year. We are constantly learning of more neoclouds and more hyperscaler data centers emerging.

In particular, we think the neoclouds will be challenging stocks this year; challenging in the sense that their stock price performance is going to be hard to predict. There is a lot of skepticism around their business model, but there is also the potential for them to sign more megadeals with hyperscalers. At this point, long-term forecasting of their performance is essentially impossible. We think their earnings streams now resemble the proverbial snake swallowing an elephant – a giant bulge with the upcoming hyperscaler deals, falling off sharply when those deals end. That does not mean they are terrible investments, but the best approach to them this year will be highly tactical.

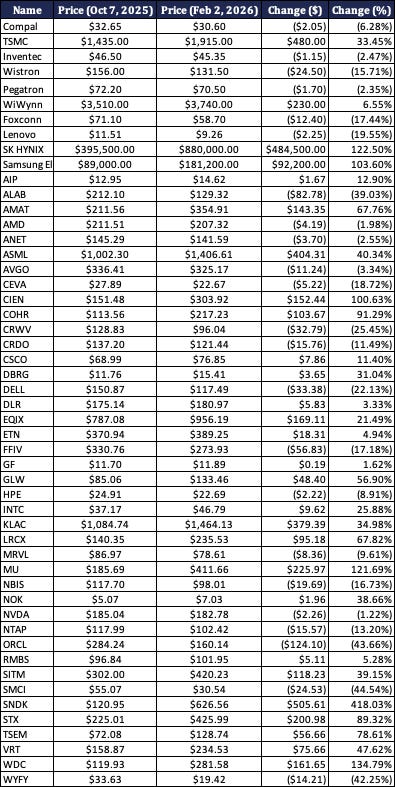

Finally, we want to revisit our Slightly Unhinged List of AI stocks from last October.

Jay’s Slightly Unhinged List of AI Stocks

Source: FactSet

We cautioned that not all of these would do well, but at least we got the memory and storage names on there. We should also point out that we forgot to add Corning, which has done very well – all that AI is going to need a lot of optical fiber.

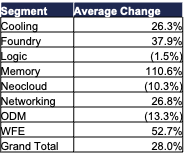

Looking at all of those by segment, the comparison with a wave is clear. Spending on memory hit last year, as did spending to ease the capacity constraints at fabs, as evidenced by the Wafer Fabrication Equipment (WFE) spend. Logic traded sideways – as we noted, many of those companies are maxed out on capacity.

Jay’s Slightly Unhinged List of AI Stocks by Segment

Source: FactSet